Agentic Infrastructure: History Repeating Itself

TL;DR

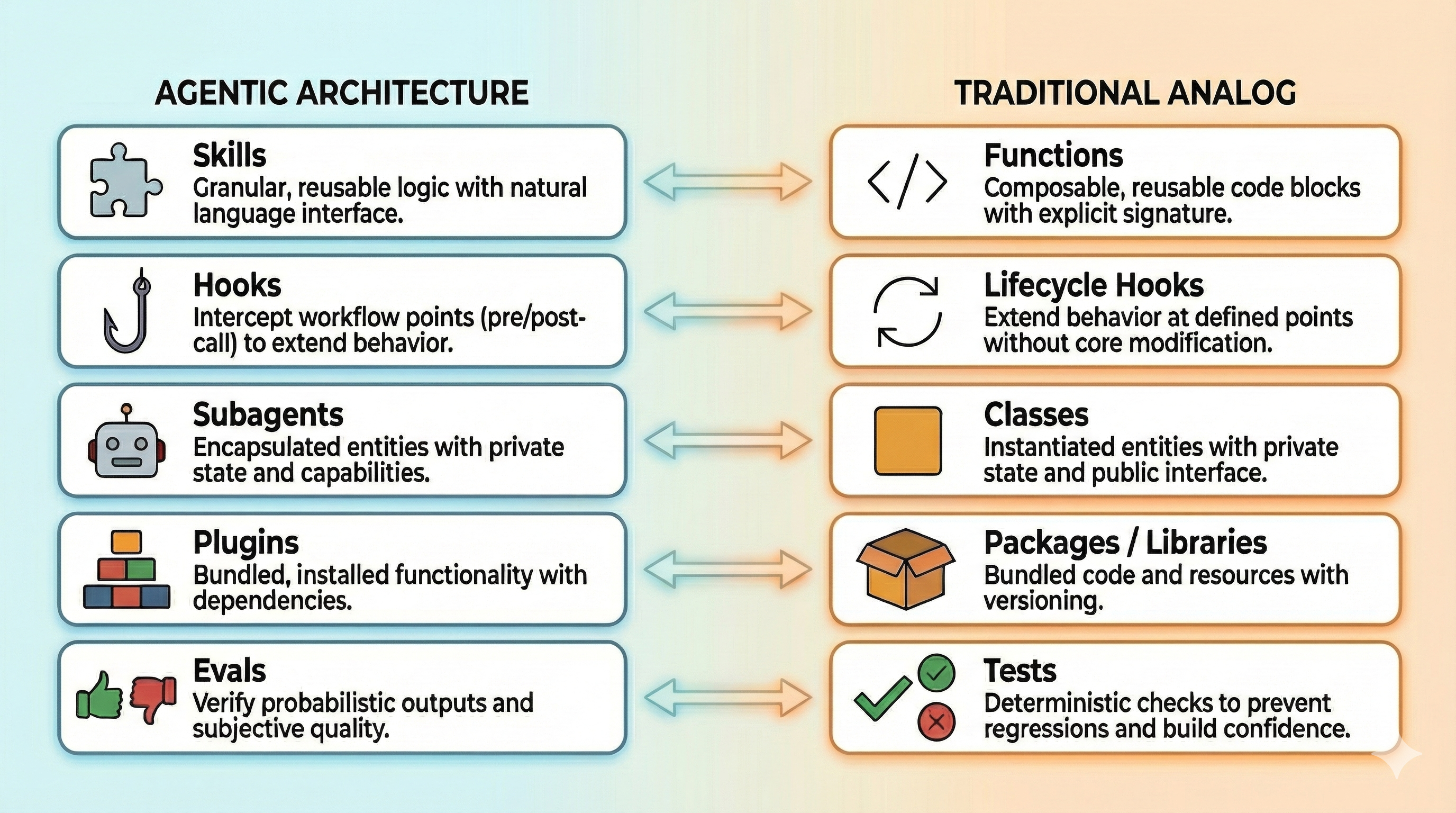

- Skills are like functions, subagents are like classes, plugins are like packages, and evals are like tests

- The key difference is what you optimize for - context management and one-shot accuracy matter more than raw speed

- We're in the early discovery phase, but standardization is coming fast as patterns emerge

- Experienced developers can transfer their intuition - the mental models carry over

Over the past few weeks, I've been digging deep into how Claude Code defines its architecture for agentic workflows. Skills, hooks, subagents, plugins, evals - the ecosystem is growing fast, and at first glance, each piece seems like something entirely new.

But the more I played with how these components integrate, the more familiar it all started to feel.

We've seen this before. We've built this before. The vocabulary is different, but the underlying patterns are the same ones we've been refining for decades in traditional software architecture.

The Mapping

Skills are Functions

Skills are granular pieces of functionality that you can integrate into your agent. The agent calls them when it needs them - just like functions in traditional code. They're composable, reusable, and focused on doing one thing well. The only difference is the interface: natural language instead of explicit type signatures.

Hooks are Lifecycle Hooks

If you've worked with React, this will feel familiar. Hooks let you intercept specific points in the agent's workflow and inject your own functionality. Pre-tool call, post-tool call, on error - these are lifecycle events. The purpose is the same as it's always been: extending behavior at well-defined integration points without modifying the core system.

Subagents are Classes

This one clicked for me recently. Subagents are entities - they encapsulate their own context, they can contain skills and hooks (or not), and they operate as self-contained units.

When you spawn a subagent, you're instantiating a class. It has its own state, its own capabilities, its own lifecycle. The parent agent doesn't reach into its internals; it communicates through a defined interface.

Plugins are Packages

Plugins are collections of functionality you install and integrate - exactly like NPM packages or Python libraries. They bundle related capabilities together, they have their own dependencies, and they extend what your agent can do without you having to build everything from scratch. The ecosystem dynamics are familiar too: some plugins become standards, some are abandoned.

Evals are Tests

If you're building agentic systems without evals, you're shipping code without tests.

I want to be explicit here: evals and tests are not the same thing. Tests are deterministic. Evals deal with probabilistic outputs and subjective quality. The mechanics differ significantly.

But in terms of purpose? They're analogous. Both answer the question: "Does this thing work the way it should?" Both give you confidence before shipping. Both catch regressions. Both require upfront investment that pays off over time.

Where the Analogy Breaks

Traditional developers never had to think about "how much does this function weigh in my working memory?" Agent developers do.

Traditional code optimization is about stability: don't break, handle edge cases, run fast. You spend your time writing defensive code, covering failure modes, and shaving milliseconds off hot paths.

Agentic workflows introduce a different constraint: context management.

Context is finite and expensive. Every token you load into an agent's context window is a token you can't use for something else. Are you loading context efficiently? Is it being cached correctly? Are you paying for the same information twice?

This isn't a concern traditional code has. Memory is cheap. Disk is cheaper. But context windows have hard limits, and tokens cost money.

Anthropic's approach to this is worth studying. Their Agent Skills architecture uses progressive disclosure - loading only skill metadata at startup, then pulling in full instructions only when the agent determines they're relevant. It's context-aware lazy loading.

Speed vs. Accuracy

No matter how fast or scalable the system is, its value is limited by how reliably it produces correct results. Accuracy is the bottleneck.

Traditional code optimization obsesses over milliseconds because users feel latency. Every frame matters. Every API response time gets measured.

Agentic workflows flip this. An agent can now accomplish in one pass what used to take a developer days of manual work. When you're automating complex, multi-step tasks, the raw speed of each individual operation matters less than whether the agent gets it right.

The optimization target shifts from "how fast?" to "how close to one-shotting?" If an agent can complete a task correctly on the first attempt, a few extra seconds of processing time is irrelevant.

Why This Matters

The Learning Curve Isn't as Steep as It Looks

If you already understand functions, classes, and packages, you're not starting from scratch. The mental models transfer. When someone explains that a skill is "like a function the agent can call," you immediately know what that means - scoped inputs, expected outputs, composability. The vocabulary is new, but the concepts aren't.

We Can Avoid Past Mistakes

We over-engineered microservices before we understood the operational costs. We ignored distributed tracing until debugging production issues became impossible. We created dependency hell with NPM and spent years building tools to dig ourselves out.

If agentic infrastructure follows the same trajectory - and it's showing every sign that it will - we don't have to repeat those mistakes. We can build observability in from the start. We can think about skill versioning before we have breaking changes in production. We can design plugin security models before someone ships a malicious package.

We Can Predict What's Coming

If the analogy holds, the tooling roadmap writes itself:

- Skill registries - centralized discovery, like NPM or PyPI

- Agent debuggers - step through agent reasoning like you step through code

- Dependency managers - handle conflicts between plugins, version pinning

- Security scanners - audit skills and plugins for vulnerabilities

- Profilers - understand where agents spend tokens and time

Some of this exists in primitive form today. Most of it doesn't. But if history is any guide, it will.

Best Practices Transfer

Principles we've refined over decades don't disappear just because the medium changed:

- Separation of concerns - keep skills focused on one thing

- Single responsibility - a subagent should have one reason to change

- Composition over inheritance - combine simple skills rather than building monolithic ones

- Don't repeat yourself - extract common patterns into reusable components

We Know Where We Are

Right now, we're in the "early microservices" phase. Everyone's doing it differently. Best practices are tribal knowledge. The tooling is immature. Half the ecosystem will be deprecated in two years.

That's not a criticism - it's just where we are on the curve. The chaos is temporary.

Where I Think This Is Going

Right now, everyone is experimenting. What workflows actually work? How should skills be structured? When do you reach for a subagent versus a hook? There are no established answers yet - just people trying things and sharing what they learn.

This is exactly where microservices were in 2014, where React was in 2015, where containerization was before Kubernetes won. Lots of competing approaches, lots of strong opinions, lots of churn.

But standardization is coming. You can already see the early signs:

- Anthropic publishing Agent Skills as an open standard

- Common patterns emerging in how people structure their

.claudedirectories - Shared vocabularies developing around hooks, skills, and subagents

- Best practices starting to crystallize in blog posts and repos

In the coming weeks and months, expect this to accelerate. The tooling will mature. The sharp edges will get sanded down. The CLI experience will get easier as more tools emerge to bridge the gap between "possible" and "accessible."

The people experimenting now - building skills, writing evals, figuring out what works - are laying the groundwork for everyone else.

What This Means for Builders

Pay attention to what's becoming standard.

As more people use these tools, certain workflows and toolchains will rise to the top. Not because they're theoretically best, but because they work - they're simple, efficient, and require the least configuration to get good results.

That's what everyone is optimizing for right now: best results, simplest path, minimal setup. The patterns that win will be the ones that deliver on that promise.

This won't be fixed. Whatever becomes standard today will evolve as the tooling matures and new capabilities emerge. But in the near term, the opportunity is to watch what's working, learn from the early adopters, and build on patterns that are gaining traction rather than reinventing from scratch.

The builders who thrive will be the ones who recognize the familiar patterns underneath the new vocabulary - and use that recognition to move faster.